Snowflake SnowPro-Core: SnowPro Core Certification Exam Practice Test

SnowPro Core Certification Exam Questions and Answers

What is a responsibility of Snowflake’s virtual warehouses?

Options:

Infrastructure management

Metadata management

Query execution

Query parsing and optimization

Permanent storage of micro-partitions

Answer:

C

Explanation:

Snowflake’s virtual warehouses are responsible for query execution. They are clusters of compute resources that execute SQL statements, perform DML operations, and load data into tables.

Where is Snowflake metadata stored?

Options:

Within the data files

In the virtual warehouse layer

In the cloud services layer

In the remote storage layer

Answer:

C

Explanation:

Snowflake’s architecture is divided into three layers: database storage, query processing, and cloud services. The metadata, which includes information about the structure of the data, the SQL operations performed, and the service-level policies, is stored in the cloud services layer. This layer acts as the brain of the Snowflake environment, managing metadata, query optimization, and transaction coordination.

Which Snowflake edition enables data sharing only through Snowflake Support?

Options:

Virtual Private Snowflake

Business Critical

Enterprise

Standard

Answer:

A

Explanation:

The Snowflake edition that enables data sharing only through Snowflake Support is Virtual Private Snowflake (VPS). By default, VPS does not permit data sharing outside of the VPS environment, but it can be enabled through Snowflake Support.

Which feature allows a user the ability to control the organization of data in a micro-partition?

Options:

Range Partitioning

Search Optimization Service

Automatic Clustering

Horizontal Partitioning

Answer:

C

Explanation:

Automatic Clustering is the feature that lets users influence how data is organized within micro-partitions in Snowflake. Once a user defines a clustering key on a table, Snowflake automatically reorganizes the data in the micro-partitions to optimize query performance.

What can a Snowflake user do with the information included in the details section of a Query Profile?

Options:

Determine the total duration of the query.

Determine the role of the user who ran the query.

Determine the source system that the queried table is from.

Determine if the query was on structured or semi-structured data.

Answer:

A

Explanation:

The details section of a Query Profile in Snowflake provides users with various statistics and information about the execution of a query. One of the key pieces of information that can be determined from this section is the total duration of the query, which helps in understanding the performance and identifying potential bottlenecks. References: [COF-C02] SnowPro Core Certification Exam Study Guide

A Snowflake user has been granted the CREATE DATA EXCHANGE LISTING privilege with their role.

Which tasks can this user now perform on the Data Exchange? (Select TWO).

Options:

Rename listings.

Delete provider profiles.

Modify listings properties.

Modify incoming listing access requests.

Submit listings for approval/publishing.

Answer:

C, E

Explanation:

With the CREATE DATA EXCHANGE LISTING privilege, a Snowflake user can modify the properties of listings and submit them for approval or publishing on the Data Exchange. This allows them to manage and share data sets with consumers effectively. References: Based on general data exchange practices in cloud services as of 2021.

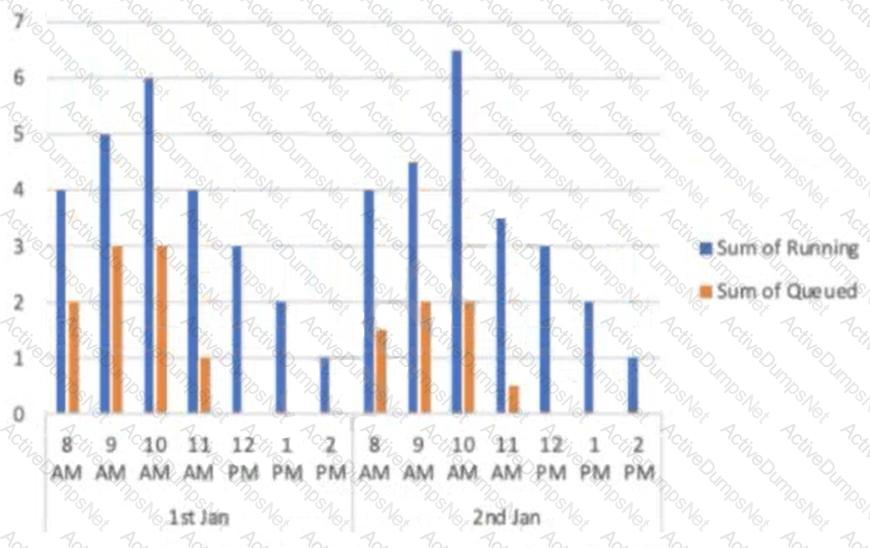

A user has a standard multi-cluster warehouse auto-scaling policy in place.

Which condition will trigger a cluster to shut down?

Options:

When after 2-3 consecutive checks the system determines that the load on the most-loaded cluster could be redistributed.

When after 5-6 consecutive checks the system determines that the load on the most-loaded cluster could be redistributed.

When after 5-6 consecutive checks the system determines that the load on the least-loaded cluster could be redistributed.

When after 2-3 consecutive checks the system determines that the load on the least-loaded cluster could be redistributed.

Answer:

D

Explanation:

In a standard multi-cluster warehouse with auto-scaling, a cluster will shut down when, after 2-3 consecutive checks, the system determines that the load on the least-loaded cluster could be redistributed to other clusters. This ensures efficient resource utilization and cost management. References: [COF-C02] SnowPro Core Certification Exam Study Guide
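The shut-down rule described above can be sketched as a small check over a history of load evaluations. This is a toy model, not Snowflake's scheduler: the function name `should_shut_down`, the boolean check history, and the default threshold of 3 are all assumptions chosen for illustration (the text only says "2-3 consecutive checks").

```python
from typing import List


def should_shut_down(checks: List[bool], required_consecutive: int = 3) -> bool:
    """Return True once the most recent checks all say the load could be redistributed.

    Each entry in `checks` is a hypothetical result of one periodic evaluation:
    True means the least-loaded cluster's work could move to the other clusters.
    `required_consecutive` is an assumed threshold within the 2-3 range.
    """
    if len(checks) < required_consecutive:
        return False
    # Only an unbroken run of positive checks at the end of the history counts.
    return all(checks[-required_consecutive:])


history = [True, False, True, True, True]
print(should_shut_down(history))
```

A single negative check resets the run, which is why one failed check means no shutdown until enough new positive checks accumulate.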

Which parameter can be used to instruct a COPY command to verify data files instead of loading them into a specified table?

Options:

STRIP_NULL_VALUES

SKIP_BYTE_ORDER_MARK

REPLACE_INVALID_CHARACTERS

VALIDATION_MODE

Answer:

D

Explanation:

The VALIDATION_MODE parameter can be used with the COPY command to verify data files without loading them into the specified table. This parameter allows users to check for errors in the files.

What action can a user take to address query concurrency issues?

Options:

Enable the query acceleration service.

Enable the search optimization service.

Add additional clusters to the virtual warehouse

Resize the virtual warehouse to a larger instance size.

Answer:

C

Explanation:

To address query concurrency issues, a user can add additional clusters to the virtual warehouse. This allows for the distribution of queries across multiple clusters, reducing the load on any single cluster and improving overall query performance.

Which privilege is required for a role to be able to resume a suspended warehouse if auto-resume is not enabled?

Options:

USAGE

OPERATE

MONITOR

MODIFY

Answer:

B

Explanation:

The OPERATE privilege is required for a role to resume a suspended warehouse if auto-resume is not enabled. This privilege allows the role to start, stop, suspend, or resume a virtual warehouse.

References: [COF-C02] SnowPro Core Certification Exam Study Guide

Credit charges for Snowflake virtual warehouses are calculated based on which of the following considerations? (Choose two.)

Options:

The number of queries executed

The number of active users assigned to the warehouse

The size of the virtual warehouse

The length of time the warehouse is running

The duration of the queries that are executed

Answer:

C, D

Explanation:

Credit charges for Snowflake virtual warehouses are calculated based on the size of the virtual warehouse and the length of time the warehouse is running. The size determines the compute resources available, and charges are incurred for the time these resources are utilized.
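As a rough illustration of how size and running time combine, the arithmetic can be sketched as follows. The per-hour rates (doubling with each size) and the 60-second minimum per start are assumptions based on Snowflake's published billing model for standard warehouses and are not stated in the text above. The function name is hypothetical.

```python
# Assumed credits per hour for standard warehouses; each size doubles the previous one.
CREDITS_PER_HOUR = {
    "XSMALL": 1,
    "SMALL": 2,
    "MEDIUM": 4,
    "LARGE": 8,
    "XLARGE": 16,
}

# Assumption: billing is per second, with a 60-second minimum each time the warehouse starts.
MINIMUM_BILLED_SECONDS = 60


def warehouse_credits(size: str, seconds_running: int) -> float:
    """Estimate credits for one continuous run of a single-cluster warehouse."""
    if seconds_running <= 0:
        return 0.0  # A warehouse that never ran consumes no credits.
    billed_seconds = max(seconds_running, MINIMUM_BILLED_SECONDS)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


# A MEDIUM warehouse running for 30 minutes.
print(warehouse_credits("MEDIUM", 30 * 60))
```

The number of queries, the number of users, and individual query durations do not appear in the calculation, which matches the answer above.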

Which of the following describes the Snowflake Cloud Services layer?

Options:

Coordinates activities in the Snowflake account

Executes queries submitted by the Snowflake account users

Manages quotas on the Snowflake account storage

Manages the virtual warehouse cache to speed up queries

Answer:

A

Explanation:

The Snowflake Cloud Services layer coordinates activities within the Snowflake account. It is responsible for tasks such as authentication, infrastructure management, metadata management, query parsing and optimization, and access control. References: Based on general cloud database architecture knowledge.

What happens when a database is cloned?

Options:

It does not retain any privileges granted on the source object.

It replicates all granted privileges on the corresponding source objects.

It replicates all granted privileges on the corresponding child objects.

It replicates all granted privileges on the corresponding child schema objects.

Answer:

A

Explanation:

When a database is cloned in Snowflake, it does not retain any privileges that were granted on the source object. The clone will need to have privileges reassigned as necessary for users to access it. References: [COF-C02] SnowPro Core Certification Exam Study Guide

A user needs to create a materialized view in the schema MYDB.MYSCHEMA. Which statements will provide this access?

Options:

GRANT ROLE MYROLE TO USER USER1;

GRANT CREATE MATERIALIZED VIEW ON SCHEMA MYDB.MYSCHEMA TO ROLE MYROLE;

GRANT ROLE MYROLE TO USER USER1;

GRANT CREATE MATERIALIZED VIEW ON SCHEMA MYDB.MYSCHEMA TO USER USER1;

GRANT ROLE MYROLE TO USER USER1;

GRANT CREATE MATERIALIZED VIEW ON SCHEMA MYDB.MYSCHEMA TO USER1;

GRANT ROLE MYROLE TO USER USER1;

GRANT CREATE MATERIALIZED VIEW ON SCHEMA MYDB.MYSCHEMA TO MYROLE;

Answer:

A

Explanation:

To provide a user with the necessary access to create a materialized view in a schema, the user must be granted a role that has the CREATE MATERIALIZED VIEW privilege on that schema. First, the role is granted to the user, and then the privilege is granted to the role.
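The role-based model behind this answer (privileges go to roles, roles go to users) can be sketched with two lookup tables. This is an illustrative model only, not how Snowflake stores grants. The dictionaries and the `user_can` helper are hypothetical names, and role hierarchy is deliberately left out.

```python
# Privileges are granted to roles, never directly to users (hypothetical in-memory model).
role_privileges = {
    "MYROLE": {("CREATE MATERIALIZED VIEW", "MYDB.MYSCHEMA")},
}

# Roles are then granted to users.
user_roles = {
    "USER1": {"MYROLE"},
}


def user_can(user: str, privilege: str, obj: str) -> bool:
    """Return True if any role granted to the user holds the privilege on the object."""
    return any(
        (privilege, obj) in role_privileges.get(role, set())
        for role in user_roles.get(user, set())
    )


print(user_can("USER1", "CREATE MATERIALIZED VIEW", "MYDB.MYSCHEMA"))
```

In this model there is no place to attach a privilege to a user directly, which mirrors why the options that grant to USER1 do not work.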

What service is provided as an integrated Snowflake feature to enhance Multi-Factor Authentication (MFA) support?

Options:

Duo Security

OAuth

Okta

Single Sign-On (SSO)

Answer:

A

Explanation:

Snowflake provides Multi-Factor Authentication (MFA) support as an integrated feature, powered by the Duo Security service. This service is managed completely by Snowflake, and users do not need to sign up separately with Duo.

How can a user change which columns are referenced in a view?

Options:

Modify the columns in the underlying table

Use the ALTER VIEW command to update the view

Recreate the view with the required changes

Materialize the view to perform the changes

Answer:

C

Explanation:

In Snowflake, to change the columns referenced in a view, the view must be recreated with the required changes. The ALTER VIEW command does not allow changing the definition of a view; it can only be used to rename a view, convert it to or from a secure view, or add, overwrite, or remove a comment for a view. Therefore, the correct approach is to drop the existing view and create a new one with the desired column references.

What does Snowflake recommend regarding database object ownership? (Select TWO).

Options:

Create objects with ACCOUNTADMIN and do not reassign ownership.

Create objects with SYSADMIN.

Create objects with SECURITYADMIN to ease granting of privileges later.

Create objects with a custom role and grant this role to SYSADMIN.

Use only MANAGED ACCESS SCHEMAS for objects owned by ACCOUNTADMIN.

Answer:

B, D

Explanation:

Snowflake recommends creating objects with a role that has the necessary privileges and is not overly permissive. SYSADMIN is typically used for managing system-level objects and operations. Creating objects with a custom role and granting this role to SYSADMIN allows for more granular control and adherence to the principle of least privilege. References: Based on best practices for database object ownership and role management.

Which stream type can be used for tracking the records in external tables?

Options:

Append-only

External

Insert-only

Standard

Answer:

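C

Explanation:

Insert-only streams are the stream type supported for external tables. An insert-only stream tracks row inserts only; it does not record delete operations that remove rows from the inserted set. ‘External’ is not a Snowflake stream type; the available types are standard, append-only, and insert-only.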

Data storage for individual tables can be monitored using which commands and/or objects? (Choose two.)

Options:

SHOW STORAGE BY TABLE;

SHOW TABLES;

Information Schema -> TABLE_HISTORY

Information Schema -> TABLE_FUNCTION

Information Schema -> TABLE_STORAGE_METRICS

Answer:

B, E

Explanation:

Data storage for individual tables can be monitored with the ‘SHOW TABLES;’ command, whose output includes the number of bytes for each table, and with the Information Schema view ‘TABLE_STORAGE_METRICS’, which breaks storage down into active, Time Travel, and Fail-safe bytes. ‘SHOW STORAGE BY TABLE;’ is not a Snowflake command. References: Snowflake Documentation

Which features make up Snowflake's column level security? (Select TWO).

Options:

Continuous Data Protection (CDP)

Dynamic Data Masking

External Tokenization

Key pair authentication

Row access policies

Answer:

B, C

Explanation:

Snowflake’s column level security features include Dynamic Data Masking and External Tokenization. Dynamic Data Masking uses masking policies to selectively mask data at query time, while External Tokenization allows for the tokenization of data before loading it into Snowflake and detokenizing it at query runtime.

How does a scoped URL expire?

Options:

When the data cache clears.

When the persisted query result period ends.

The encoded URL access is permanent.

The length of time is specified in the expiration_time argument.

Answer:

B

Explanation:

A scoped URL expires when the persisted query result period ends, which is typically after the results cache expires. This is currently set to 24 hours.

What is the minimum Snowflake edition needed for database failover and fail-back between Snowflake accounts for business continuity and disaster recovery?

Options:

Standard

Enterprise

Business Critical

Virtual Private Snowflake

Answer:

C

Explanation:

The minimum Snowflake edition required for database failover and fail-back between Snowflake accounts for business continuity and disaster recovery is the Business Critical edition. References: Snowflake Documentation.

What is used to diagnose and troubleshoot network connections to Snowflake?

Options:

SnowCD

Snowpark

Snowsight

SnowSQL

Answer:

A

Explanation:

SnowCD (Snowflake Connectivity Diagnostic Tool) is used to diagnose and troubleshoot network connections to Snowflake. It runs a series of connection checks to evaluate the network connection to Snowflake.

Using variables in Snowflake is denoted by using which SQL character?

Options:

@

&

$

#

Answer:

C

Explanation:

In Snowflake, variables are denoted by a dollar sign ($). Variables can be used in SQL statements where a literal constant is allowed, and they must be prefixed with a $ sign to distinguish them from bind values and column names.

What is cached during a query on a virtual warehouse?

Options:

All columns in a micro-partition

Any columns accessed during the query

The columns in the result set of the query

All rows accessed during the query

Answer:

C

Explanation:

During a query on a virtual warehouse, the columns in the result set of the query are cached. This allows for faster retrieval of data if the same or a similar query is run again, as the system can retrieve the data from the cache rather than reprocessing the entire query. References: [COF-C02] SnowPro Core Certification Exam Study Guide

Which parameter prevents streams on tables from becoming stale?

Options:

MAX_DATA_EXTENSION_TIME_IN_DAYS

MIN_DATA_RETENTION_TIME_IN_DAYS

LOCK_TIMEOUT

STALE_AFTER

Answer:

A

Explanation:

The parameter that prevents streams on tables from becoming stale is MAX_DATA_EXTENSION_TIME_IN_DAYS. This parameter specifies the maximum number of days for which Snowflake can extend the data retention period for the table to prevent streams on the table from becoming stale.

Which of the following are characteristics of security in Snowflake?

Options:

Account and user authentication is only available with the Snowflake Business Critical edition.

Support for HIPAA and GDPR compliance is available for all Snowflake editions.

Periodic rekeying of encrypted data is available with the Snowflake Enterprise edition and higher.

Private communication to internal stages is allowed in the Snowflake Enterprise edition and higher.

Answer:

C

Explanation:

One of the security features of Snowflake includes the periodic rekeying of encrypted data, which is available with the Snowflake Enterprise edition and higher. This ensures that the encryption keys are rotated regularly to maintain a high level of security. References: [COF-C02] SnowPro Core Certification Exam Study Guide

If a multi-cluster warehouse is using an economy scaling policy, how long will queries wait in the queue before another cluster is started?

Options:

1 minute

2 minutes

6 minutes

8 minutes

Answer:

C

Explanation:

In a multi-cluster warehouse with an economy scaling policy, Snowflake starts an additional cluster only if it estimates there is enough query load to keep that cluster busy for at least 6 minutes. This minimizes costs by favoring fully loaded clusters, at the price of some queries waiting longer in the queue. References: [COF-C02] SnowPro Core Certification Exam Study Guide

Which role has the ability to create and manage users and roles?

Options:

ORGADMIN

USERADMIN

SYSADMIN

SECURITYADMIN

Answer:

B

Explanation:

The USERADMIN role in Snowflake has the ability to create and manage users and roles within the Snowflake environment. This role is dedicated specifically to creating and managing users and roles.

Which kind of Snowflake table stores file-level metadata for each file in a stage?

Options:

Directory

External

Temporary

Transient

Answer:

A

Explanation:

The kind of Snowflake table that stores file-level metadata for each file in a stage is a directory table. A directory table is an implicit object layered on a stage and stores file-level metadata about the data files in the stage.

Which stages are used with the Snowflake PUT command to upload files from a local file system? (Choose three.)

Options:

Schema Stage

User Stage

Database Stage

Table Stage

External Named Stage

Internal Named Stage

Answer:

B, D, F

Explanation:

The Snowflake PUT command is used to upload files from a local file system to Snowflake stages, specifically the user stage, table stage, and internal named stage. These stages are where the data files are temporarily stored before being loaded into Snowflake tables.

What is the Fail-safe period for a transient table in the Snowflake Enterprise edition and higher?

Options:

0 days

1 day

7 days

14 days

Answer:

A

Explanation:

The Fail-safe period for a transient table in Snowflake, regardless of the edition (including Enterprise edition and higher), is 0 days. Fail-safe is a data protection feature that provides additional retention beyond the Time Travel period for recovering data in case of accidental deletion or corruption. However, transient tables are designed for temporary or short-term use and do not benefit from the Fail-safe feature, meaning that once their Time Travel period expires, data cannot be recovered.

References:

- Snowflake Documentation: Understanding Fail-safe
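The retention rules discussed above can be summarized as a small lookup. The Fail-safe values for transient tables come from the explanation; the 7-day Fail-safe for permanent tables and the Time Travel maximums (1 day on Standard, 90 days for permanent tables on Enterprise and higher) are added here from Snowflake's documented defaults as an assumption. The names and edition strings are hypothetical.

```python
# Fail-safe period in days by table type; the edition makes no difference here.
FAIL_SAFE_DAYS = {"permanent": 7, "transient": 0, "temporary": 0}


def max_time_travel_days(table_type: str, edition: str) -> int:
    """Return the longest Time Travel retention that can be configured.

    `edition` is one of "standard", "enterprise", "business_critical", "vps"
    (labels chosen for this sketch).
    """
    if table_type == "permanent" and edition != "standard":
        return 90
    # Transient and temporary tables, and every table on Standard, top out at 1 day.
    return 1


print(FAIL_SAFE_DAYS["transient"], max_time_travel_days("transient", "enterprise"))
```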

When referring to User-Defined Function (UDF) names in Snowflake, what does the term overloading mean?

Options:

There are multiple SQL UDFs with the same names and the same number of arguments.

There are multiple SQL UDFs with the same names and the same number of argument types.

There are multiple SQL UDFs with the same names but with a different number of arguments or argument types.

There are multiple SQL UDFs with different names but the same number of arguments or argument types.

Answer:

C

Explanation:

In Snowflake, overloading refers to the creation of multiple User-Defined Functions (UDFs) with the same name but differing in the number or types of their arguments. This feature allows for more flexible function usage, as Snowflake can differentiate between functions based on the context of their invocation, such as the types or the number of arguments passed. Overloading helps to create more adaptable and readable code, as the same function name can be used for similar operations on different types of data.

References:

- Snowflake Documentation: User-Defined Functions
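Overload resolution as described above can be modeled by keying each function on its name plus its argument types. The sketch below is a Python analogy, not Snowflake's resolver. The `register` and `call` helpers and the `add5` functions are hypothetical, and matching is by exact type only, with no implicit casting.

```python
from typing import Callable, Dict, Tuple

# Registry keyed by (function name, argument types), so one name can map to many bodies.
_registry: Dict[Tuple[str, Tuple[type, ...]], Callable] = {}


def register(name: str, *arg_types: type):
    """Decorator that stores a function under its name and argument signature."""
    def decorator(fn: Callable) -> Callable:
        _registry[(name, arg_types)] = fn
        return fn
    return decorator


def call(name: str, *args):
    """Pick the overload whose signature matches the number and types of the arguments."""
    key = (name, tuple(type(a) for a in args))
    if key not in _registry:
        raise TypeError(f"no overload of {name} for argument types {key[1]}")
    return _registry[key](*args)


@register("add5", int)
def _add5_number(n):
    return n + 5


@register("add5", str)
def _add5_string(s):
    return s + "5"


@register("add5", int, int)
def _add5_pair(a, b):
    return a + b + 5


print(call("add5", 1), call("add5", "1"), call("add5", 1, 2))
```

All three functions share the name `add5`; they differ only in argument count or argument type, which is exactly what option C describes.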

There are two Snowflake accounts in the same cloud provider region: one is production and the other is non-production. How can data be easily transferred from the production account to the non-production account?

Options:

Clone the data from the production account to the non-production account.

Create a data share from the production account to the non-production account.

Create a subscription in the production account and have it publish to the non-production account.

Create a reader account using the production account and link the reader account to the non-production account.

Answer:

B

Explanation:

To easily transfer data from a production account to a non-production account in Snowflake within the same cloud provider region, creating a data share is the most efficient approach. Data sharing allows for live, read-only access to selected data objects from the production account to the non-production account without the need to duplicate or move the actual data. This method facilitates seamless access to the data for development, testing, or analytics purposes in the non-production environment.

References:

- Snowflake Documentation: Data Sharing

The VALIDATE table function has which parameter as an input argument for a Snowflake user?

Options:

LAST_QUERY_ID

CURRENT_STATEMENT

UUID_STRING

JOB_ID

Answer:

D

Explanation:

The VALIDATE table function takes JOB_ID as its input argument: either the query ID of a previous COPY INTO execution or _last to refer to the most recent load in the current session. The function returns the errors encountered while loading files in that COPY execution, which makes it useful for reviewing rejected records after a load.

References:

- Snowflake Documentation: VALIDATE

A user wants to add additional privileges to the system-defined roles for their virtual warehouse. How does Snowflake recommend they accomplish this?

Options:

Grant the additional privileges to a custom role.

Grant the additional privileges to the ACCOUNTADMIN role.

Grant the additional privileges to the SYSADMIN role.

Grant the additional privileges to the ORGADMIN role.

Answer:

A

Explanation:

Snowflake recommends enhancing the granularity and management of privileges by creating and utilizing custom roles. When additional privileges are needed beyond those provided by the system-defined roles for a virtual warehouse or any other resource, these privileges should be granted to a custom role. This approach allows for more precise control over access rights and the ability to tailor permissions to the specific needs of different user groups or applications within the organization, while also maintaining the integrity and security model of system-defined roles.

References:

- Snowflake Documentation: Roles and Privileges

What type of function returns one value for each invocation?

Options:

Aggregate

Scalar

Table

Window

Answer:

B

Explanation:

Scalar functions in Snowflake (and SQL in general) are designed to return a single value for each invocation. They operate on a single value and return a single result, making them suitable for a wide range of data transformations and calculations within queries.

References:

- Snowflake Documentation: Functions
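The difference between a scalar function and an aggregate can be seen in a few lines. This is a plain Python analogy with made-up data, not Snowflake code: the scalar function runs once per row and yields one value each time, while the aggregate consumes all rows and yields a single value.

```python
def to_upper(value: str) -> str:
    """Scalar-style function: one input value in, one output value out."""
    return value.upper()


rows = ["alpha", "beta", "gamma"]  # hypothetical column values

# Scalar behavior: one result per invocation, so three rows give three results.
scalar_results = [to_upper(value) for value in rows]

# Aggregate behavior: one result for the whole group of rows.
aggregate_result = len(rows)

print(scalar_results, aggregate_result)
```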

Which view can be used to determine if a table has frequent row updates or deletes?

Options:

TABLES

TABLE_STORAGE_METRICS

STORAGE_DAILY_HISTORY

STORAGE_USAGE

Answer:

B

Explanation:

The TABLE_STORAGE_METRICS view can be used to determine if a table has frequent row updates or deletes. This view provides detailed metrics on the storage utilization of tables within Snowflake, including metrics that reflect the impact of DML operations such as updates and deletes on table storage. For example, a table whose Time Travel and Fail-safe bytes are large relative to its active bytes is retaining many superseded micro-partitions, which indicates frequent updates or deletions.

References:

- Snowflake Documentation: TABLE_STORAGE_METRICS View

Which privilege is required to use the search optimization service in Snowflake?

Options:

GRANT SEARCH OPTIMIZATION ON SCHEMA

GRANT SEARCH OPTIMIZATION ON DATABASE

GRANT ADD SEARCH OPTIMIZATION ON SCHEMA

GRANT ADD SEARCH OPTIMIZATION ON DATABASE

Answer:

C

Explanation:

To use the search optimization service in Snowflake, a role needs the ADD SEARCH OPTIMIZATION privilege on the schema that contains the table:

- Option C: GRANT ADD SEARCH OPTIMIZATION ON SCHEMA <schema_name> TO ROLE <role>. This command grants the specified role the ability to add search optimization to tables in that schema.

Options A and B do not include the keyword "ADD," which is part of the privilege name. Option D incorrectly mentions the database level; the privilege is granted at the schema level, not the database level. References: Snowflake documentation on the use of GRANT statements for configuring search optimization.

How can a user get the MOST detailed information about individual table storage details in Snowflake?

Options:

SHOW TABLES command

SHOW EXTERNAL TABLES command

TABLES view

TABLE_STORAGE_METRICS view

Answer:

D

Explanation:

To obtain the most detailed information about individual table storage in Snowflake, the TABLE_STORAGE_METRICS view is the recommended option. This view reports storage per table, broken down into active bytes, Time Travel bytes, Fail-safe bytes, and bytes retained for clones. This level of detail is useful for monitoring, managing, and optimizing storage costs.

References:

- Snowflake Documentation: Information Schema

Which statement accurately describes Snowflake's architecture?

Options:

It uses a local data repository for all compute nodes in the platform.

It is a blend of shared-disk and shared-everything database architectures.

It is a hybrid of traditional shared-disk and shared-nothing database architectures.

It reorganizes loaded data into internal optimized, compressed, and row-based format.

Answer:

C

Explanation:

Snowflake's architecture is unique in that it combines elements of both traditional shared-disk and shared-nothing database architectures. This hybrid approach allows Snowflake to offer the scalability and performance benefits of a shared-nothing architecture (with compute and storage separated) while maintaining the simplicity and flexibility of a shared-disk architecture in managing data across all nodes in the system. This results in an architecture that provides on-demand scalability, both vertically and horizontally, without sacrificing performance or data cohesion.

References:

- Snowflake Documentation: Snowflake Architecture

What are characteristics of reader accounts in Snowflake? (Select TWO).

Options:

Reader account users cannot add new data to the account.

Reader account users can share data to other reader accounts.

A single reader account can consume data from multiple provider accounts.

Data consumers are responsible for reader account setup and data usage costs.

Reader accounts enable data consumers to access and query data shared by the provider.

Answer:

A, E

Explanation:

Characteristics of reader accounts in Snowflake include:

- A. Reader account users cannot add new data to the account: Reader accounts are intended for data consumption only. Users of these accounts can query and analyze the data shared with them but cannot upload or add new data to the account.

- E. Reader accounts enable data consumers to access and query data shared by the provider: One of the primary purposes of reader accounts is to allow data consumers to access and perform queries on the data shared by another Snowflake account, facilitating secure and controlled data sharing.

References:

- Snowflake Documentation: Reader Accounts

What is the only supported character set for loading and unloading data from all supported file formats?

Options:

UTF-8

UTF-16

ISO-8859-1

WINDOWS-1253

Answer:

A

Explanation:

UTF-8 is the only supported character set for loading and unloading data from all supported file formats in Snowflake. UTF-8 is a widely used encoding that supports a large range of characters from various languages, making it suitable for internationalization and ensuring data compatibility across different systems and platforms.

References:

- Snowflake Documentation: Data Loading and Unloading

Which SQL command can be used to verify the privileges that are granted to a role?

Options:

SHOW GRANTS ON ROLE

SHOW ROLES

SHOW GRANTS TO ROLE

SHOW GRANTS FOR ROLE

Answer:

C

Explanation:

To verify the privileges that have been granted to a specific role in Snowflake, the correct SQL command is SHOW GRANTS TO ROLE <Role Name>. This command lists all the privileges granted to the specified role, including access to schemas, tables, and other database objects. This is a useful command for administrators and users with sufficient privileges to audit and manage role permissions within the Snowflake environment.

References:

- Snowflake Documentation: SHOW GRANTS

Why would a Snowflake user decide to use a materialized view instead of a regular view?

Options:

The base tables do not change frequently.

The results of the view change often.

The query is not resource intensive.

The query results are not used frequently.

Answer:

A

Explanation:

A Snowflake user would decide to use a materialized view instead of a regular view primarily when the base tables do not change frequently. Materialized views store the result of the view query and update it as the underlying data changes, making them ideal for situations where the data is relatively static and query performance is critical. By precomputing and storing the query results, materialized views can significantly reduce query execution times for complex aggregations, joins, and calculations.

References:

- Snowflake Documentation: Materialized Views

Which Snowflake edition offers the highest level of security for organizations that have the strictest requirements?

Options:

Standard

Enterprise

Business Critical

Virtual Private Snowflake (VPS)

Answer:

D

Explanation:

The Virtual Private Snowflake (VPS) edition offers the highest level of security for organizations with the strictest security requirements. This edition provides a dedicated and isolated instance of Snowflake, including enhanced security features and compliance certifications to meet the needs of highly regulated industries or any organization requiring the utmost in data protection and privacy.

References:

- Snowflake Documentation: Snowflake Editions

Which function will provide the proxy information needed to protect Snowsight?

Options:

SYSTEMADMIN_TAG

SYSTEM$GET_PRIVATELINK

SYSTEM$ALLOWLIST

SYSTEMAUTHORIZE

Answer:

C

Explanation:

The SYSTEM$ALLOWLIST function (option C) returns the host names and port numbers that must be reachable through a firewall or proxy for Snowflake to work, including the entry for the Snowsight deployment. That output is the information needed to configure a proxy or allowlist in front of Snowsight. SYSTEM$GET_PRIVATELINK only reports whether private connectivity is enabled for the account; it does not return proxy details.

References:

- Snowflake Documentation: PrivateLink Setup

What are characteristics of transient tables in Snowflake? (Select TWO).

Options:

Transient tables have a Fail-safe period of 7 days.

Transient tables can be cloned to permanent tables.

Transient tables persist until they are explicitly dropped.

Transient tables can be altered to make them permanent tables.

Transient tables have Time Travel retention periods of 0 or 1 day.

Answer:

C, E

Explanation:

Transient tables in Snowflake are designed for transitory data that needs to persist across sessions, with the following characteristics:

- C. Transient tables persist until they are explicitly dropped: Unlike temporary tables that exist for the duration of a session, transient tables remain in the database until explicitly removed by a user, offering more durability for short-term data storage needs.

- E. Transient tables have Time Travel retention periods of 0 or 1 day: The retention period can be set to 0 or 1 day regardless of edition, and there is no Fail-safe period, so option A is incorrect.

Option B is incorrect because a transient table can be cloned only to another transient or temporary table, and option D is incorrect because a transient table cannot be converted to a permanent table after creation.

References:

- Snowflake Documentation: Transient Tables

What is it called when a customer managed key is combined with a Snowflake managed key to create a composite key for encryption?

Options:

Hierarchical key model

Client-side encryption

Tri-secret secure encryption

Key pair authentication

Answer:

C

Explanation:

Tri-secret secure encryption is a security model employed by Snowflake that involves combining a customer-managed key with a Snowflake-managed key to create a composite key for encrypting data. This model enhances data security by requiring both the customer-managed key and the Snowflake-managed key to decrypt data, thus ensuring that neither party can access the data independently. It represents a balanced approach to key management, leveraging both customer control and Snowflake's managed services for robust data encryption.
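The idea of a composite key that depends on two independently held keys can be sketched in a few lines. This is a minimal illustration only, not Snowflake's actual key derivation: the function name `composite_key`, the use of HMAC-SHA256, and the key material are all assumptions made for the example.

```python
import hashlib
import hmac


def composite_key(customer_key: bytes, provider_key: bytes) -> bytes:
    """Derive a 32-byte key that depends on both inputs (illustrative only)."""
    return hmac.new(customer_key, provider_key, hashlib.sha256).digest()


# Hypothetical key material for demonstration.
customer = b"customer-managed-key"
provider = b"snowflake-managed-key"
master = composite_key(customer, provider)
```

Changing either input produces a different derived key, which is the property the composite model relies on: withdrawing the customer-managed key makes the composite key unavailable.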

References:

- Snowflake Documentation: Encryption and Key Management

When floating-point number columns are unloaded to CSV or JSON files, Snowflake truncates the values to approximately what?

Options:

(12,2)

(10,4)

(14,8)

(15,9)

Answer:

D

Explanation:

When unloading floating-point number columns to CSV or JSON files, Snowflake truncates the values to approximately 15 significant digits with 9 digits following the decimal point, which can be represented as (15,9). This ensures a balance between accuracy and efficiency in representing floating-point numbers in text-based formats, which is essential for data interchange and processing applications that consume these files.
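The effect of writing a double as text with a limited number of decimal places can be seen in a small sketch. The 9-digit format string below is an assumed stand-in for the approximate (15,9) truncation, not Snowflake's actual formatting routine, and the binary round trip is included only as a contrast with formats that store the raw 8-byte value.

```python
import struct

value = 1.0 / 3.0  # 0.333... stored as a 64-bit double

# Text path: keep 9 digits after the decimal point (assumed stand-in
# for the approximate (15,9) truncation described above).
as_text = f"{value:.9f}"
from_text = float(as_text)

# Binary path: pack and unpack the 8-byte IEEE 754 representation.
from_binary = struct.unpack("<d", struct.pack("<d", value))[0]
```

Reading the text form back does not reproduce the original double, while the binary round trip does.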

References:

- Snowflake Documentation: Data Unloading Considerations

What is the MINIMUM permission needed to access a file URL from an external stage?

Options:

MODIFY

READ

SELECT

USAGE

Answer:

D

Explanation:

To access a file URL from an external stage in Snowflake, the minimum permission required is USAGE on the stage object. USAGE permission allows a user to reference the stage in SQL commands, necessary for actions like listing files or loading data from the stage, but does not permit the user to alter or drop the stage.

References:

- Snowflake Documentation: Access Control

The effects of query pruning can be observed by evaluating which statistics? (Select TWO).

Options:

Partitions scanned

Partitions total

Bytes scanned

Bytes read from result

Bytes written

Answer:

A, B

Explanation:

Query pruning in Snowflake is the optimization in which the system uses micro-partition metadata to skip partitions that cannot contribute to the query result. The Query Profile reports its effect in the Pruning statistics:

- Option A: Partitions scanned. The number of micro-partitions actually read by the query.

- Option B: Partitions total. The total number of micro-partitions in the table. When partitions scanned is a small fraction of partitions total, pruning is effective.

Options C, D, and E are I/O statistics. "Bytes scanned" usually falls when pruning works well, but it is not reported as a pruning statistic, and "Bytes read from result" and "Bytes written" relate to result retrieval and output rather than to the efficiency of data scanning.

References:

- Snowflake documentation on performance tuning and query optimization techniques, specifically how query pruning affects data access.

What happens when a network policy includes values that appear in both the allowed and blocked IP address list?

Options:

Those IP addresses are allowed access to the Snowflake account as Snowflake applies the allowed IP address list first.

Those IP addresses are denied access to the Snowflake account as Snowflake applies the blocked IP address list first.

Snowflake issues an alert message and adds the duplicate IP address values to both the allowed and blocked IP address lists.

Snowflake issues an error message and adds the duplicate IP address values to both the allowed and blocked IP address list

Answer:

B

Explanation:

In Snowflake, when setting up a network policy that specifies both allowed and blocked IP address lists, if an IP address appears in both lists, access from that IP address will be denied. The reason is that Snowflake prioritizes security, and the presence of an IP address in the blocked list indicates it should not be allowed regardless of its presence in the allowed list. This ensures that access controls remain stringent and that any potentially unsafe IP addresses are not inadvertently permitted access.
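The precedence rule can be modeled with the standard `ipaddress` module. This is a minimal sketch of the evaluation order only; the function name `is_allowed` and the example addresses are hypothetical and do not represent Snowflake's implementation.

```python
import ipaddress


def is_allowed(client_ip, allowed, blocked):
    """Evaluate a client IP against allowed and blocked CIDR lists.

    The blocked list is checked first, so an address present in
    both lists is denied.
    """
    ip = ipaddress.ip_address(client_ip)
    if any(ip in ipaddress.ip_network(cidr) for cidr in blocked):
        return False
    return any(ip in ipaddress.ip_network(cidr) for cidr in allowed)


# Hypothetical policy: a /24 range is allowed, one address inside it is blocked.
allowed_list = ["192.168.1.0/24"]
blocked_list = ["192.168.1.99"]
```

Calling `is_allowed("192.168.1.99", allowed_list, blocked_list)` returns `False` even though the address falls inside the allowed range, because the blocked check runs first.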

References:

- Snowflake Documentation: Network Policies

Which URL provides access to files in Snowflake without authorization?

Options:

File URL

Scoped URL

Pre-signed URL

Scoped file URL

Answer:

C

Explanation:

A Pre-signed URL provides access to files stored in Snowflake without requiring authorization at the time of access. This feature allows users to generate a URL with a limited validity period that grants temporary access to a file in a secure manner. It's particularly useful for sharing data with external parties or applications without the need for them to authenticate directly with Snowflake.

References:

- Snowflake Documentation: Using Pre-signed URLs

What information does the Query Profile provide?

Options:

Graphical representation of the data model

Statistics for each component of the processing plan

Detailed information about the database schema

Real-time monitoring of the database operations

Answer:

B

Explanation:

The Query Profile in Snowflake provides a graphical representation and statistics for each component of the query's execution plan. This includes details such as the execution time, the number of rows processed, and the amount of data scanned for each operation within the query. The Query Profile is a crucial tool for understanding and optimizing the performance of queries, as it helps identify potential bottlenecks and inefficiencies.

References:

- Snowflake Documentation: Understanding the Query Profile

What will happen if a Snowflake user increases the size of a suspended virtual warehouse?

Options:

The provisioning of new compute resources for the warehouse will begin immediately.

The warehouse will remain suspended but new resources will be added to the query acceleration service.

The provisioning of additional compute resources will be in effect when the warehouse is next resumed.

The warehouse will resume immediately and start to share the compute load with other running virtual warehouses.

Answer:

C

Explanation:

When a Snowflake user increases the size of a suspended virtual warehouse, the changes to compute resources are queued but do not take immediate effect. The provisioning of additional compute resources occurs only when the warehouse is resumed. This ensures that resources are allocated efficiently, aligning with Snowflake's commitment to cost-effective and on-demand scalability.

References:

- Snowflake Documentation: Virtual Warehouses

A user has semi-structured data to load into Snowflake but is not sure what types of operations will need to be performed on the data. Based on this situation, what type of column does Snowflake recommend be used?

Options:

ARRAY

OBJECT

TEXT

VARIANT

Answer:

D

Explanation:

When dealing with semi-structured data in Snowflake, and the specific types of operations to be performed on the data are not yet determined, Snowflake recommends using the VARIANT data type. The VARIANT type is highly flexible and can hold data loaded from semi-structured formats such as JSON, Avro, ORC, Parquet, and XML within a single column. This flexibility allows users to perform various operations on the data, including querying and manipulation of nested data structures without predefined schemas.

References:

- Snowflake Documentation: Semi-structured Data Types

How does Snowflake describe its unique architecture?

Options:

A multi-cluster shared data architecture using a central data repository and massively parallel processing (MPP)

A multi-cluster shared nothing architecture using a siloed data repository and massively parallel processing (MPP)

A single-cluster shared nothing architecture using a siloed data repository and symmetric multiprocessing (SMP)

A multi-cluster shared nothing architecture using a siloed data repository and symmetric multiprocessing (SMP)

Answer:

A

Explanation:

Snowflake's unique architecture is described as a multi-cluster, shared data architecture that leverages massively parallel processing (MPP). This architecture separates compute and storage resources, enabling Snowflake to scale them independently. It does not use a single cluster or rely solely on symmetric multiprocessing (SMP); rather, it uses a combination of shared-nothing architecture for compute clusters (virtual warehouses) and a centralized storage layer for data, optimizing for both performance and scalability.

References:

- Snowflake Documentation: Snowflake Architecture Overview

When unloading data, which file format preserves the data values for floating-point number columns?

Options:

Avro

CSV

JSON

Parquet

Answer:

D

Explanation:

When unloading data, the Parquet file format is known for its efficiency in preserving the data values for floating-point number columns. Parquet is a columnar storage file format that offers high compression ratios and efficient data encoding schemes. It is especially effective for floating-point data, as it maintains high precision and supports efficient querying and analysis.

References:

- Snowflake Documentation: Using the Parquet File Format for Unloading Data

For Directory tables, what stage allows for automatic refreshing of metadata?

Options:

User stage

Table stage

Named internal stage

Named external stage

Answer:

D

Explanation:

For directory tables, a named external stage allows for the automatic refreshing of metadata. This capability is particularly useful when dealing with files stored on external storage services (like Amazon S3, Google Cloud Storage, or Azure Blob Storage) and accessed through Snowflake. The external stage references these files, and the directory table's metadata can be automatically updated to reflect changes in the underlying files.

References:

- Snowflake Documentation: External Stages

What does the worksheet and database explorer feature in Snowsight allow users to do?

Options:

Add or remove users from a worksheet.

Move a worksheet to a folder or a dashboard.

Combine multiple worksheets into a single worksheet.

Tag frequently accessed worksheets for ease of access.

Answer:

D

Explanation:

The worksheet and database explorer feature in Snowsight allows users to tag frequently accessed worksheets for ease of access. This functionality helps users organize and quickly navigate to the worksheets they use most often, enhancing productivity and streamlining the data exploration and analysis process within Snowsight, Snowflake's web-based query and visualization interface.

References:

- Snowflake Documentation: Snowsight (UI for Snowflake)

Which command should be used to unload all the rows from a table into one or more files in a named stage?

Options:

COPY INTO

GET

INSERT INTO

PUT

Answer:

A

Explanation:

To unload data from a table into one or more files in a named stage, the COPY INTO <location> command should be used. This command exports the result of a query, such as selecting all rows from a table, into files stored in the specified stage. The COPY INTO command is versatile, supporting various file formats and compression options for efficient data unloading.

References:

- Snowflake Documentation: COPY INTO Location

What are value types that a VARIANT column can store? (Select TWO)

Options:

STRUCT

OBJECT

BINARY

ARRAY

CLOB

Answer:

B, D

Explanation:

A VARIANT column in Snowflake can store semi-structured data types. This includes:

- B. OBJECT: An object is a collection of key-value pairs in JSON, and a VARIANT column can store this type of data structure.

- D. ARRAY: An array is an ordered list of zero or more values, which can be of any variant-supported data type, including objects or other arrays.

The VARIANT data type is specifically designed to handle semi-structured data like JSON, Avro, ORC, Parquet, or XML, allowing for the storage of nested and complex data structures.
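The difference between the two value kinds can be illustrated with ordinary JSON parsing. The sketch below is an analogy in Python, not Snowflake code, and the documents are hypothetical: a JSON object corresponds to a set of key-value pairs, and a JSON array to an ordered list of values that may themselves be nested.

```python
import json

# Hypothetical JSON documents of the kind a VARIANT column might hold.
object_doc = json.loads('{"id": 1, "tags": ["a", "b"]}')
array_doc = json.loads('[{"id": 1}, {"id": 2}]')

# A JSON object parses to a dict (key-value pairs, like OBJECT);
# a JSON array parses to a list (ordered values, like ARRAY).
kinds = (type(object_doc).__name__, type(array_doc).__name__)
nested_tag = object_doc["tags"][1]
```

The nested lookup shows an array stored inside an object, the kind of nesting a single semi-structured value can carry.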

References:

- Snowflake Documentation on Semi-Structured Data Types

- SnowPro® Core Certification Study Guide

Which command can be used to load data into an internal stage?

Options:

LOAD

COPY

GET

PUT

Answer:

D

Explanation:

The PUT command is used to load data into an internal stage in Snowflake. This command uploads data files from a local file system to a named internal stage, making the data available for subsequent loading into a Snowflake table using the COPY INTO command.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Data Loading

When reviewing a query profile, what is a symptom that a query is too large to fit into the memory?

Options:

A single join node uses more than 50% of the query time

Partitions scanned is equal to partitions total

An AggregateOperator node is present

The query is spilling to remote storage

Answer:

D

Explanation:

When a query in Snowflake is too large to fit into the available memory, it will start spilling to remote storage. This is an indication that the memory allocated for the query is insufficient for its execution, and as a result, Snowflake uses remote disk storage to handle the overflow. This spill to remote storage can lead to slower query performance due to the additional I/O operations required.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Query Profile

- Snowpro Core Certification Exam Flashcards

What happens when an external or an internal stage is dropped? (Select TWO).

Options:

When dropping an external stage, the files are not removed and only the stage is dropped

When dropping an external stage, both the stage and the files within the stage are removed

When dropping an internal stage, the files are deleted with the stage and the files are recoverable

When dropping an internal stage, the files are deleted with the stage and the files are not recoverable

When dropping an internal stage, only selected files are deleted with the stage and are not recoverable

Answer:

A, D

Explanation:

When an external stage is dropped in Snowflake, the reference to the external storage location is removed, but the actual files within the external storage (like Amazon S3, Google Cloud Storage, or Microsoft Azure) are not deleted. This means that the data remains intact in the external storage location, and only the stage object in Snowflake is removed.

On the other hand, when an internal stage is dropped, any files that were uploaded to the stage are deleted along with the stage itself. These files are not recoverable once the internal stage is dropped, as they are permanently removed from Snowflake’s storage.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Stages

What is a machine learning and data science partner within the Snowflake Partner Ecosystem?

Options:

Informatica

Power BI

Adobe

DataRobot

Answer:

D

Explanation:

DataRobot is recognized as a machine learning and data science partner within the Snowflake Partner Ecosystem. It provides an enterprise AI platform that enables users to build and deploy accurate predictive models quickly. As a partner, DataRobot integrates with Snowflake to enhance data science capabilities.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Machine Learning & Data Science Partners

https://docs.snowflake.com/en/user-guide/ecosystem-analytics.html

What are ways to create and manage data shares in Snowflake? (Select TWO)

Options:

Through the Snowflake web interface (UI)

Through the DATA_SHARE=TRUE parameter

Through SQL commands

Through the enable_share=true parameter

Using the CREATE SHARE AS SELECT * TABLE command

Answer:

A, C

Explanation:

Data shares in Snowflake can be created and managed through the Snowflake web interface, which provides a user-friendly graphical interface for various operations. Additionally, SQL commands can be used to perform these tasks programmatically, offering flexibility and automation capabilities.

Which Snowflake objects track DML changes made to tables, like inserts, updates, and deletes?

Options:

Pipes

Streams

Tasks

Procedures

Answer:

B

Explanation:

In Snowflake, Streams are the objects that track Data Manipulation Language (DML) changes made to tables, such as inserts, updates, and deletes. Streams record these changes along with metadata about each change, enabling actions to be taken using the changed data. This process is known as change data capture (CDC).

Which of the following objects can be shared through secure data sharing?

Options:

Masking policy

Stored procedure

Task

External table

Answer:

D

Explanation:

Secure data sharing in Snowflake allows users to share various objects between Snowflake accounts without physically copying the data, thus not consuming additional storage. Among the options provided, external tables can be shared through secure data sharing. External tables are used to query data directly from files in a stage without loading the data into Snowflake tables, making them suitable for sharing across different Snowflake accounts.

References:

- Snowflake Documentation on Secure Data Sharing

- SnowPro™ Core Certification Companion: Hands-on Preparation and Practice

A user has an application that writes a new file to a cloud storage location every 5 minutes.

What would be the MOST efficient way to get the files into Snowflake?

Options:

Create a task that runs a COPY INTO operation from an external stage every 5 minutes

Create a task that puts the files in an internal stage and automate the data loading wizard

Create a task that runs a GET operation to intermittently check for new files

Set up cloud provider notifications on the file location and use Snowpipe with auto-ingest

Answer:

D

Explanation:

The most efficient way to get files into Snowflake, especially when new files are being written to a cloud storage location at frequent intervals, is to use Snowpipe with auto-ingest. Snowpipe is Snowflake’s continuous data ingestion service that loads data as soon as it becomes available in a cloud storage location. By setting up cloud provider notifications, Snowpipe can be triggered automatically whenever new files are written to the storage location, ensuring that the data is loaded into Snowflake with minimal latency and without the need for manual intervention or scheduling frequent tasks.

References:

- Snowflake Documentation on Snowpipe

- SnowPro® Core Certification Study Guide

What is the minimum Snowflake edition required to create a materialized view?

Options:

Standard Edition

Enterprise Edition

Business Critical Edition

Virtual Private Snowflake Edition

Answer:

B

Explanation:

Materialized views in Snowflake are a feature that allows for the pre-computation and storage of query results for faster query performance. This feature is available starting from the Enterprise Edition of Snowflake. It is not available in the Standard Edition, and while it is also available in higher editions like Business Critical and Virtual Private Snowflake, the Enterprise Edition is the minimum requirement.

References:

- Snowflake Documentation on CREATE MATERIALIZED VIEW

- Snowflake Documentation on Working with Materialized Views

https://docs.snowflake.com/en/sql-reference/sql/create-materialized-view.html#:~:text=Materialized%20views%20require%20Enterprise%20Edition,upgrading%2C%20please%20contact%20Snowflake%20Support .

In which scenarios would a user have to pay Cloud Services costs? (Select TWO).

Options:

Compute credits = 50, Cloud Services credits = 10

Compute credits = 80, Cloud Services credits = 5

Compute credits = 10, Cloud Services credits = 9

Compute credits = 120, Cloud Services credits = 10

Compute credits = 200, Cloud Services credits = 26

Answer:

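A, E

Explanation:

In Snowflake, Cloud Services usage is billed only when daily Cloud Services consumption exceeds 10% of the daily virtual warehouse compute consumption (both measured in credits), and only the portion above that threshold is charged. In scenario A, 10 credits exceeds the 5-credit threshold (10% of 50), and in scenario E, 26 credits exceeds the 20-credit threshold (10% of 200). Scenarios B (5 against a threshold of 8) and D (10 against a threshold of 12) stay under it. Note that scenario C as printed (9 against a threshold of 1) would also exceed the threshold, which conflicts with the two-answer key; its figures may have been altered in transcription.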
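The 10% rule can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function name `billable_cloud_services` is hypothetical, and it treats the figures in each option as one day's usage, which is an assumption.

```python
def billable_cloud_services(compute_credits, cloud_services_credits):
    """Return the cloud services credits billed after the 10% adjustment.

    Assumption: both figures are one day's usage, and only the
    portion above 10% of compute credits is charged.
    """
    allowance = compute_credits / 10
    return max(0.0, cloud_services_credits - allowance)


# Scenarios from the options above: (compute, cloud services)
scenarios = {"A": (50, 10), "B": (80, 5), "C": (10, 9), "D": (120, 10), "E": (200, 26)}
charged = {k: billable_cloud_services(*v) for k, v in scenarios.items()}
```

Any scenario whose cloud services credits exceed one tenth of its compute credits yields a positive value in `charged`; the rest yield zero.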

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake’s official documentation on billing and usage

Which services does the Snowflake Cloud Services layer manage? (Select TWO).

Options:

Compute resources

Query execution

Authentication

Data storage

Metadata

Answer:

C, E

Explanation:

The Snowflake Cloud Services layer manages a variety of services that are crucial for the operation of the Snowflake platform. Among these services, Authentication and Metadata management are key components. Authentication is essential for controlling access to the Snowflake environment, ensuring that only authorized users can perform actions within the platform. Metadata management involves handling all the metadata related to objects within Snowflake, such as tables, views, and databases, which is vital for the organization and retrieval of data.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation

https://docs.snowflake.com/en/user-guide/intro-key-concepts.html

Which semi-structured file formats are supported when unloading data from a table? (Select TWO).

Options:

ORC

XML

Avro

Parquet

JSON

Answer:

D, E

Explanation:

Snowflake supports unloading table data to two semi-structured file formats: JSON and Parquet. Avro, ORC, and XML are supported for loading data only, not for unloading.

https://docs.snowflake.com/en/user-guide/data-unload-prepare.html#:~:text=Supported%20File%20Formats,-The%20following%20file &text=Delimited%20(CSV%2C%20TSV%2C%20etc.)

What tasks can be completed using the copy command? (Select TWO)

Options:

Columns can be aggregated

Columns can be joined with an existing table

Columns can be reordered

Columns can be omitted

Data can be loaded without the need to spin up a virtual warehouse

Answer:

C, D

Explanation:

The COPY command in Snowflake allows for the reordering of columns as they are loaded into a table, and it also permits the omission of columns from the source file during the load process. This provides flexibility in handling the schema of the data being ingested.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide
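The column reordering and omission described above can be pictured with a small positional-column sketch. This is plain Python over an in-memory CSV, not Snowflake code; the file contents are hypothetical, and the comparison to selecting `$2, $1` from a staged file is an analogy.

```python
import csv
import io

# Hypothetical staged file with three positional columns.
staged = io.StringIO("1,Ada,London\n2,Linus,Helsinki\n")

# Keep the 2nd then 1st column and omit the 3rd, similar in spirit to
# selecting $2, $1 from a staged file during a load.
rows = [(rec[1], rec[0]) for rec in csv.reader(staged)]
```

Each resulting row carries the name first and the identifier second, and the city column never reaches the target.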

Which stage type can be altered and dropped?

Options:

Database stage

External stage

Table stage

User stage

Answer:

B

Explanation:

External stages can be altered and dropped in Snowflake. An external stage points to an external location, such as an S3 bucket, where data files are stored. Users can modify the stage’s definition or drop it entirely if it’s no longer needed. This is in contrast to table stages, which are tied to specific tables and cannot be altered or dropped independently.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Stages

True or False: Fail-safe can be disabled within a Snowflake account.

Options:

True

False

Answer:

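B

Explanation:

Fail-safe cannot be disabled or configured by users. Permanent tables always receive a 7-day Fail-safe period, while transient and temporary tables have none.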

Which command is used to unload data from a Snowflake table into a file in a stage?

Options:

COPY INTO

GET

WRITE

EXTRACT INTO

Answer:

A

Explanation:

The COPY INTO command is used in Snowflake to unload data from a table into a file in a stage. This command allows for the export of data from Snowflake tables into flat files, which can then be used for further analysis, processing, or storage in external systems.

References:

- Snowflake Documentation on Unloading Data

- Snowflake SnowPro Core: Copy Into Command to Unload Rows to Files in Named Stage

What is a responsibility of Snowflake's virtual warehouses?

Options:

Infrastructure management

Metadata management

Query execution

Query parsing and optimization

Management of the storage layer

Answer:

C

Explanation:

The primary responsibility of Snowflake’s virtual warehouses is to execute queries. Virtual warehouses are one of the key components of Snowflake’s architecture, providing the compute power required to perform data processing tasks such as running SQL queries, performing joins, aggregations, and other data manipulations.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Virtual Warehouses

Which of the following describes how multiple Snowflake accounts in a single organization relate to various cloud providers?

Options:

Each Snowflake account can be hosted in a different cloud vendor and region.

Each Snowflake account must be hosted in a different cloud vendor and region

All Snowflake accounts must be hosted in the same cloud vendor and region

Each Snowflake account can be hosted in a different cloud vendor, but must be in the same region.

Answer:

A

Explanation:

Snowflake’s architecture allows for flexibility in account hosting across different cloud vendors and regions. This means that within a single organization, different Snowflake accounts can be set up in various cloud environments, such as AWS, Azure, or GCP, and in different geographical regions. This allows organizations to leverage the global infrastructure of multiple cloud providers and optimize their data storage and computing needs based on regional requirements, data sovereignty laws, and other considerations.

https://docs.snowflake.com/en/user-guide/intro-regions.html

What data is stored in the Snowflake storage layer? (Select TWO).

Options:

Snowflake parameters

Micro-partitions

Query history

Persisted query results

Standard and secure view results

Answer:

B, D

Explanation:

The Snowflake storage layer is responsible for storing data in an optimized, compressed, columnar format. This includes micro-partitions, which are the fundamental storage units that contain the actual data stored in Snowflake. Additionally, persisted query results, which are the results of queries that have been materialized and stored for future use, are also kept within this layer. This design allows for efficient data retrieval and management within the Snowflake architecture.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Key Concepts & Architecture | Snowflake Documentation

Which of the following Snowflake objects can be shared using a secure share? (Select TWO).

Options:

Materialized views

Sequences

Procedures

Tables

Secure User Defined Functions (UDFs)

Answer:

D, E

Explanation:

Secure sharing in Snowflake allows users to share specific objects with other Snowflake accounts without physically copying the data, thus not consuming additional storage. Tables and Secure User Defined Functions (UDFs) are among the objects that can be shared using this feature. Materialized views, sequences, and procedures are not shareable objects in Snowflake.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Secure Data Sharing

What is the MOST performant file format for loading data in Snowflake?

Options:

CSV (Unzipped)

Parquet

CSV (Gzipped)

ORC

Answer:

B

Explanation:

Parquet is a columnar storage file format that is optimized for performance in Snowflake. It is designed to be efficient for both storage and query performance, particularly for complex queries on large datasets. Parquet files support efficient compression and encoding schemes, which can lead to significant savings in storage and speed in query processing, making it the most performant file format for loading data into Snowflake.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Data Loading

Which of the following are valid methods for authenticating users for access into Snowflake? (Select THREE)

Options:

SCIM

Federated authentication

TLS 1.2

Key-pair authentication

OAuth

OCSP authentication

Answer:

B, D, E

Explanation:

Snowflake supports several methods for authenticating users, including federated authentication, key-pair authentication, and OAuth. Federated authentication allows users to authenticate using their organization’s identity provider. Key-pair authentication uses a public-private key pair for secure login, and OAuth is an open standard for access delegation commonly used for token-based authentication.

References:

- Authentication policies | Snowflake Documentation

- Authenticating to the server | Snowflake Documentation

- External API authentication and secrets | Snowflake Documentation

What are two ways to create and manage Data Shares in Snowflake? (Choose two.)

Options:

Via the Snowflake Web Interface (UI)

Via the data_share=true parameter

Via SQL commands

Via Virtual Warehouses

Answer:

A, C

Explanation:

In Snowflake, Data Shares can be created and managed in two primary ways:

- Via the Snowflake Web Interface (UI): Users can create and manage shares through the graphical interface provided by Snowflake, which allows for a user-friendly experience.

- Via SQL commands: Snowflake also allows the creation and management of shares using SQL commands. This method is more suited for users who prefer scripting or need to automate the process.

Which ACCOUNT_USAGE views are used to evaluate the details of dynamic data masking? (Select TWO)

Options:

ROLES

POLICY_REFERENCES

QUERY_HISTORY

RESOURCE_MONITORS

ACCESS_HISTORY

Answer:

B, E

Explanation:

To evaluate the details of dynamic data masking, the POLICY_REFERENCES and ACCESS_HISTORY views in the account_usage schema are used. The POLICY_REFERENCES view provides information about the objects to which a masking policy is applied, and the ACCESS_HISTORY view contains details about access to the masked data, which can be used to audit and verify the application of dynamic data masking policies.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Dynamic Data Masking

What transformations are supported in a CREATE PIPE ... AS COPY ... FROM (....) statement? (Select TWO.)

Options:

Data can be filtered by an optional where clause

Incoming data can be joined with other tables

Columns can be reordered

Columns can be omitted

Row level access can be defined

Answer:

C, D

Explanation:

In a CREATE PIPE ... AS COPY ... FROM (....) statement, the supported transformations are those of the COPY statement itself, which include reordering columns and omitting columns. Reordering lets the staged fields be mapped to target columns in a different order, and omitting columns enables the exclusion of fields when the incoming data contains more columns than are needed for the target table. Filtering with a WHERE clause is not supported in a COPY transformation, nor are joins with other tables or row-level access definitions.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Simple Transformations During a Load

A virtual warehouse's auto-suspend and auto-resume settings apply to which of the following?

Options:

The primary cluster in the virtual warehouse

The entire virtual warehouse

The database in which the virtual warehouse resides

The queries currently being run on the virtual warehouse

Answer:

B

Explanation:

The auto-suspend and auto-resume settings in Snowflake apply to the entire virtual warehouse. These settings allow the warehouse to automatically suspend when it’s not in use, helping to save on compute costs. When queries or tasks are submitted to the warehouse, it can automatically resume operation. This functionality is designed to optimize resource usage and cost-efficiency.

References:

- SnowPro Core Certification Exam Study Guide (as of 2021)

- Snowflake documentation on virtual warehouses and their settings (as of 2021)

Which cache type is used to cache data output from SQL queries?

Options:

Metadata cache

Result cache

Remote cache

Local file cache

Answer:

B

Explanation:

The Result cache is used in Snowflake to cache the data output from SQL queries. It stores the results of executed queries for a period of time (24 hours by default). When the same query is submitted again and the underlying table data has not changed, Snowflake returns the stored result instead of recomputing it, which saves time and compute resources.

References:

- Snowflake Documentation on Query Results Cache

- SnowPro® Core Certification Study Guide
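The reuse behavior described in the explanation above can be illustrated with a small toy model. This is only a sketch under stated assumptions: `ToyResultCache`, `data_version`, and `compute` are hypothetical names invented for the illustration, not Snowflake APIs, and the real result cache applies further conditions that are not modeled here.

```python
class ToyResultCache:
    """Toy model: a result is reused only for identical query text on unchanged data."""

    def __init__(self):
        self._store = {}     # (query_text, data_version) -> cached result
        self.executions = 0  # number of times a query was actually computed

    def run(self, query_text, data_version, compute):
        key = (query_text, data_version)
        if key not in self._store:
            # Cache miss: compute the result and remember it.
            self.executions += 1
            self._store[key] = compute()
        return self._store[key]


cache = ToyResultCache()
rows = [1, 2, 3]

# The first call computes the result; the second identical call reuses it.
first = cache.run("SELECT SUM(x) FROM t", data_version=1, compute=lambda: sum(rows))
second = cache.run("SELECT SUM(x) FROM t", data_version=1, compute=lambda: sum(rows))

# The table changes, so the (hypothetical) version is bumped and the query is recomputed.
rows.append(4)
third = cache.run("SELECT SUM(x) FROM t", data_version=2, compute=lambda: sum(rows))
```

In this model, only the first and third calls do any work; the second call is served from the cache.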

What happens to the underlying table data when a CLUSTER BY clause is added to a Snowflake table?

Options:

Data is hashed by the cluster key to facilitate fast searches for common data values

Larger micro-partitions are created for common data values to reduce the number of partitions that must be scanned

Smaller micro-partitions are created for common data values to allow for more parallelism

Data may be colocated by the cluster key within the micro-partitions to improve pruning performance

Answer:

D

Explanation:

When a CLUSTER BY clause is added to a Snowflake table, it specifies one or more columns to organize the data within the table’s micro-partitions. This clustering aims to colocate data with similar values in the same or adjacent micro-partitions. By doing so, it enhances the efficiency of query pruning, where the Snowflake query optimizer can skip over irrelevant micro-partitions that do not contain the data relevant to the query, thereby improving performance.

References:

- Snowflake Documentation on Clustering Keys & Clustered Tables

- Community discussions on how source data’s ordering affects a table with a cluster key
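The effect of colocating data on pruning can be sketched with a toy model. The code below is an illustration only: it assumes each micro-partition is a fixed-size chunk with min/max metadata, and the helper names (`build_partitions`, `partitions_scanned`) are hypothetical. Snowflake's actual micro-partitioning and pruning are considerably more sophisticated.

```python
def build_partitions(values, size):
    """Split values into fixed-size chunks and record (min, max) metadata for each."""
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    return [(min(chunk), max(chunk)) for chunk in chunks]


def partitions_scanned(partitions, target):
    """Count the partitions whose [min, max] range could contain the target value."""
    return sum(1 for low, high in partitions if low <= target <= high)


# The same twelve values, first in arrival order, then colocated by the key.
unclustered = [7, 1, 9, 3, 8, 2, 6, 4, 5, 0, 11, 10]
clustered = sorted(unclustered)

# A lookup for key = 5 must consider every partition whose range overlaps 5.
scanned_unclustered = partitions_scanned(build_partitions(unclustered, 4), 5)
scanned_clustered = partitions_scanned(build_partitions(clustered, 4), 5)
```

With the unordered data, every partition's range overlaps the target, so all three must be scanned; once the values are colocated, only one partition qualifies.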

Which COPY INTO command option outputs the data into one file?

Options:

SINGLE=TRUE

MAX_FILE_NUMBER=1

FILE_NUMBER=1

MULTIPLE=FALSE

Answer:

A

Explanation:

The COPY INTO command unloads data into a single file when the SINGLE copy option is set to TRUE. By default SINGLE is FALSE and the unload is written to multiple files in parallel. MAX_FILE_NUMBER, FILE_NUMBER, and MULTIPLE are not COPY INTO options; the related option MAX_FILE_SIZE sets the upper size limit of each output file.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Data Unloading

Where would a Snowflake user find information about query activity from 90 days ago?

Options:

account_usage.query_history view

account_usage.query_history_archive view

information_schema.query_history view

information_schema.query_history_by_session view

Answer:

A

Explanation:

To find information about query activity from 90 days ago, a Snowflake user should use the account_usage.query_history view. Views in the ACCOUNT_USAGE schema retain historical data for 365 days, which covers the 90-day period mentioned. The INFORMATION_SCHEMA query history table functions only return activity from the last 7 days, and query_history_archive is not a view in the ACCOUNT_USAGE schema.

References:

- [COF-C02] SnowPro Core Certification Exam Study Guide

- Snowflake Documentation on Account Usage Schema

What is the minimum Snowflake Edition that supports secure storage of Protected Health Information (PHI) data?

Options:

Standard Edition

Enterprise Edition

Business Critical Edition

Virtual Private Snowflake Edition

Answer:

C

Explanation:

The minimum Snowflake Edition that supports secure storage of Protected Health Information (PHI) data is the Business Critical Edition. This edition offers enhanced security features necessary for compliance with regulations such as HIPAA and HITRUST CSF.

How can a Snowflake user traverse semi-structured data?

Options:

Insert a colon (:) between the VARIANT column name and any first-level element.

Insert a colon (:) between the VARIANT column name and any second-level element.

Insert a double colon (::) between the VARIANT column name and any first-level element.

Insert a double colon (::) between the VARIANT column name and any second-level element.

Answer:

A

Explanation:

To traverse semi-structured data in Snowflake, a user can insert a colon (:) between the VARIANT column name and any first-level element. This path syntax is used to retrieve elements in a VARIANT column.
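The path syntax can be mimicked with a small sketch. This is a toy model only: it assumes a row is a Python dict whose VARIANT column holds nested dicts, that deeper levels are reached with dot notation after the first colon, and that `traverse` and the sample column `src` are hypothetical names. Unlike this sketch, which raises `KeyError`, a real query returns NULL for a missing element.

```python
def traverse(row, path):
    """Resolve 'column:first_level.second_level' against a dict-based row."""
    # The colon separates the column name from the first-level element.
    column, _, rest = path.partition(":")
    value = row[column]
    if rest:
        # Further levels are separated by dots.
        for key in rest.split("."):
            value = value[key]
    return value


# Hypothetical row with one VARIANT-like column named "src".
row = {"src": {"customer": {"name": "Ada", "city": "Oslo"}, "total": 42}}

first_level = traverse(row, "src:total")
second_level = traverse(row, "src:customer.name")
```

Here `src:total` reaches a first-level element directly after the colon, while `src:customer.name` continues one level deeper.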

Which data types can be used in Snowflake to store semi-structured data? (Select TWO)

Options:

ARRAY

BLOB

CLOB

JSON

VARIANT

Answer:

A, E

Explanation:

Snowflake supports the storage of semi-structured data using the ARRAY and VARIANT data types. The ARRAY data type can directly contain VARIANT, and thus indirectly contain any other data type, including itself. The VARIANT data type can store a value of any other type, including OBJECT and ARRAY, and is often used to represent semi-structured data formats like JSON, Avro, ORC, Parquet, or XML.

References: [COF-C02] SnowPro Core Certification Exam Study Guide

How can a Snowflake user validate data that is unloaded using the COPY INTO <location> command?

Options:

Load the data into a CSV file.

Load the data into a relational table.

Use the VALIDATION_MODE = SQL statement.

Use the VALIDATION_MODE = RETURN_ROWS statement.

Answer:

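D

Explanation:

To validate data unloaded using the COPY INTO <location> command, include VALIDATION_MODE = RETURN_ROWS in the statement. Snowflake then returns the rows produced by the query instead of writing them to the target location, so the output can be checked before the actual unload. RETURN_ROWS is the only validation mode supported for unloading.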

When working with a managed access schema, who has the OWNERSHIP privilege of any tables added to the schema?

Options:

The database owner

The object owner

The schema owner

The Snowflake user's role

Answer:

C

Explanation:

In a managed access schema, the schema owner retains the OWNERSHIP privilege of any tables added to the schema. This means that while object owners have certain privileges over the objects they create, only the schema owner can manage privilege grants on these objects.

What is the relationship between a Query Profile and a virtual warehouse?

Options:

A Query Profile can help users right-size virtual warehouses.

A Query Profile defines the hardware specifications of the virtual warehouse.

A Query Profile can help determine the number of virtual warehouses available.

A Query Profile automatically scales the virtual warehouse based on the query complexity.

Answer:

A

Explanation:

A Query Profile provides detailed execution information for a query, which can be used to analyze the performance and behavior of queries. This information can help users optimize and right-size their virtual warehouses for better efficiency. References: [COF-C02] SnowPro Core Certification Exam Study Guide

What is a characteristic of materialized views in Snowflake?

Options:

Materialized views do not allow joins.

Clones of materialized views can be created directly by the user.

Multiple tables can be joined in the underlying query of a materialized view.

Aggregate functions can be used as window functions in materialized views.

Answer:

A

Explanation:

A materialized view in Snowflake can query only a single table, so joins (including self-joins) are not allowed in its definition. The other options do not hold either: a materialized view cannot be cloned directly (it is cloned only when its containing schema or database is cloned), and window functions are not supported in a materialized view's query. References: [COF-C02] SnowPro Core Certification Exam Study Guide

What will prevent unauthorized access to a Snowflake account from an unknown source?

Options:

Network policy

End-to-end encryption

Multi-Factor Authentication (MFA)

Role-Based Access Control (RBAC)

Answer:

A

Explanation:

A network policy in Snowflake is used to restrict access to the Snowflake account from unauthorized or unknown sources. It allows administrators to specify allowed IP address ranges, thus preventing access from any IP addresses not listed in the policy.
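The allow-list check a network policy performs can be sketched as follows. This is an illustration under stated assumptions, not Snowflake's implementation: `is_allowed` is a hypothetical helper, the addresses are sample values, and the sketch assumes a blocked entry takes precedence over an allowed range.

```python
import ipaddress


def is_allowed(client_ip, allowed, blocked=()):
    """Return True if client_ip falls in an allowed range and is not blocked."""
    ip = ipaddress.ip_address(client_ip)
    # Blocked entries are checked first, so they override the allowed list.
    if any(ip in ipaddress.ip_network(entry) for entry in blocked):
        return False
    return any(ip in ipaddress.ip_network(entry) for entry in allowed)


# Hypothetical policy: allow one subnet, but block a single address inside it.
allowed = ["192.168.1.0/24"]
blocked = ["192.168.1.99"]

known_source = is_allowed("192.168.1.10", allowed, blocked)
blocked_source = is_allowed("192.168.1.99", allowed, blocked)
unknown_source = is_allowed("203.0.113.5", allowed, blocked)
```

Only the first address is admitted: the second is explicitly blocked, and the third is an unknown source outside every allowed range.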

Which commands can only be executed using SnowSQL? (Select TWO).

Options:

COPY INTO

GET

LIST

PUT

REMOVE

Answer:

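B, D

Explanation:

Of the commands listed, GET and PUT cannot be executed from a worksheet in the Snowflake web interface and require a client such as SnowSQL. PUT uploads files from the local file system to a stage, and GET downloads files from a stage to the local file system, so both need access to the client machine's files. COPY INTO, LIST, and REMOVE can be run from the web interface as well. References: [COF-C02] SnowPro Core Certification Exam Study Guide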

Which command is used to start configuring Snowflake for Single Sign-On (SSO)?

Options:

CREATE SESSION POLICY

CREATE NETWORK RULE

CREATE SECURITY INTEGRATION

CREATE PASSWORD POLICY

Answer:

C

Explanation:

To start configuring Snowflake for Single Sign-On (SSO), the CREATE SECURITY INTEGRATION command is used. This command sets up a security integration object in Snowflake, which is necessary for enabling SSO with external identity providers using SAML 2.0.

References: [COF-C02] SnowPro Core Certification Exam Study Guide

A tag object has been assigned to a table (TABLE_A) in a schema within a Snowflake database.

Which CREATE object statement will automatically assign the TABLE_A tag to a target object?

Options:

CREATE TABLE

CREATE VIEW

CREATE TABLE

CREATE MATERIALIZED VIEW

Answer:

C

Explanation:

When a tag object is assigned to a table, using the statement CREATE TABLE <table_name> AS SELECT * FROM TABLE_A will automatically assign the TABLE_A tag to the newly created table.

What is the purpose of a Query Profile?

Options:

To profile how many times a particular query was executed and analyze its usage statistics over time.

To profile a particular query to understand the mechanics of the query, its behavior, and performance.

To profile the user and/or executing role of a query and all privileges and policies applied on the objects within the query.

To profile which queries are running in each warehouse and identify proper warehouse utilization and sizing for better performance and cost balancing.

Answer:

B

Explanation: